Abstract

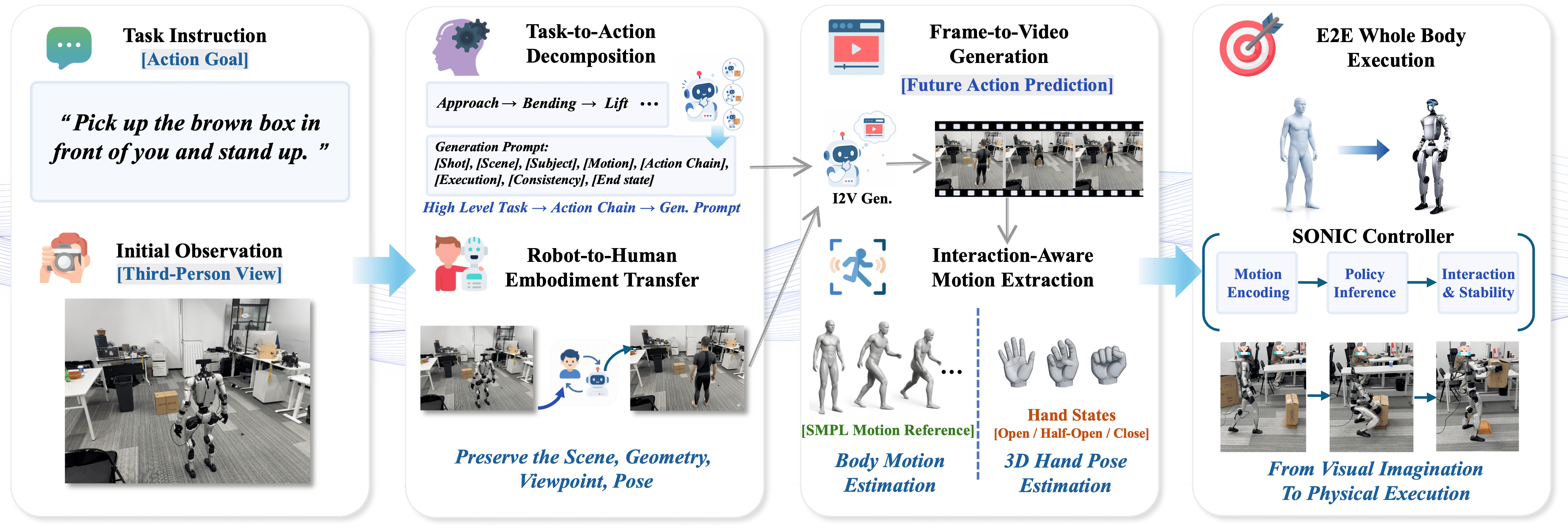

Humanoid control systems have made significant progress in recent years, yet modeling fluent, interaction-rich behavior between a robot, its surrounding environment, and task-relevant objects remains a fundamental challenge. This difficulty arises from the need to jointly capture spatial context, temporal dynamics, robot actions, and task intent at scale, which is a poor match for conventional supervision. We propose ExoActor, a novel framework that leverages the generalization capabilities of large-scale video generation models to address this problem. The key insight in ExoActor is to use third-person video generation as a unified interface for modeling interaction dynamics. Given a task instruction and scene context, ExoActor synthesizes plausible execution processes that implicitly encode coordinated interactions between robot, environment, and objects. This video output is then transformed into executable humanoid behaviors through a pipeline that estimates human motion and executes it via a general motion controller, yielding a task-conditioned behavior sequence. To validate the proposed framework, we implement it as an end-to-end system and demonstrate its generalization to new scenarios without additional real-world data collection. We conclude by discussing the limitations of the current implementation and outlining promising directions for future research, illustrating how ExoActor provides a scalable approach to modeling interaction-rich humanoid behaviors and potentially opens a new avenue for generative models to advance general-purpose humanoid intelligence.

Method

1. Exocentric Video Generation

A captured third-person robot observation is first transferred into a human-like reference frame with Nano Banana Pro (Gemini 3.1 Pro), preserving scene layout, viewpoint, pose, orientation, scale, and robot-specific proportions. Step-wise action prompts then guide video generation models such as Kling 3 and Veo 3.1 to produce task-consistent execution clips.

2. Interaction-Aware Motion Estimation

Generated videos are translated into structured motion using GENMO for whole-body trajectories and WiLoR for bilateral hand states, yielding a synchronized representation of body motion, hand pose, and interaction state.
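To make the idea of a synchronized representation concrete, here is a minimal sketch that merges per-frame body poses and hand states into one timeline. Every name in it (`HandState`, `MotionFrame`, `synchronize`) is hypothetical, the inputs are placeholders rather than the actual output formats of GENMO or WiLoR, and the rule that "interacting" means either hand is closed is an assumption made only for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class HandState:
    """Hypothetical per-frame hand state (not the real WiLoR output format)."""
    left_closed: bool
    right_closed: bool


@dataclass(frozen=True)
class MotionFrame:
    """One time step of the merged body, hand, and interaction timeline."""
    t: float                       # timestamp in seconds
    body_pose: Tuple[float, ...]   # placeholder whole-body pose vector
    hands: HandState
    interacting: bool              # assumed rule: any closed hand counts


def synchronize(
    body_poses: Sequence[Tuple[float, ...]],
    hand_states: Sequence[HandState],
    fps: float,
) -> List[MotionFrame]:
    """Pair body and hand estimates frame by frame.

    Assumes both estimators ran on the same video, so frame i of one
    stream corresponds to frame i of the other.
    """
    if len(body_poses) != len(hand_states):
        raise ValueError("body and hand streams must have the same length")
    if fps <= 0:
        raise ValueError("fps must be positive")
    frames = []
    for i, (pose, hands) in enumerate(zip(body_poses, hand_states)):
        frames.append(
            MotionFrame(
                t=i / fps,
                body_pose=tuple(pose),
                hands=hands,
                interacting=hands.left_closed or hands.right_closed,
            )
        )
    return frames
```

Keeping the three signals in a single per-frame record means a downstream consumer never has to re-align separate streams by timestamp.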

3. General Motion Tracking

The estimated motion is executed with a general humanoid motion-tracking controller, allowing the Unitree G1 robot to follow generated task demonstrations while maintaining dynamic stability.
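One practical detail that a tracking stage may need is retiming: a video-derived trajectory arrives at the video frame rate, while a controller typically consumes references at its own rate. The sketch below shows one simple way to do this with linear interpolation. It is an assumption for illustration, not a description of the controller used here; the function name `resample` is hypothetical, and plain linear blending is only reasonable for scalar joint values (rotations in other parameterizations would need a proper rotation interpolation).

```python
from typing import List, Sequence


def resample(
    frames: Sequence[Sequence[float]],
    src_fps: float,
    dst_fps: float,
) -> List[List[float]]:
    """Linearly interpolate a joint-value trajectory from src_fps to dst_fps."""
    if src_fps <= 0 or dst_fps <= 0:
        raise ValueError("frame rates must be positive")
    if len(frames) < 2:
        return [list(f) for f in frames]

    duration = (len(frames) - 1) / src_fps
    # Small epsilon guards against floating-point error at the last sample.
    n_out = int(duration * dst_fps + 1e-9) + 1

    out = []
    for k in range(n_out):
        s = k / dst_fps * src_fps          # position in source-frame units
        i = min(int(s), len(frames) - 2)   # index of the left neighbour
        a = s - i                          # blend weight toward frame i + 1
        out.append(
            [(1.0 - a) * x + a * y for x, y in zip(frames[i], frames[i + 1])]
        )
    return out
```

For example, a two-frame trajectory at 1 fps resampled to 2 fps gains one midpoint sample between the original frames.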

Key Contributions

- We identify exocentric (third-person) video generation as a scalable paradigm for modeling interaction dynamics in humanoid control, leveraging the generalization capabilities of large pretrained video models.

- We propose ExoActor, an end-to-end framework that synthesizes task execution videos and directly converts them into executable humanoid behaviors via human motion estimation and general motion tracking, without task-specific data collection.

- We demonstrate the feasibility of this paradigm on real-world humanoid tasks, showing that generated videos can be translated into interaction-aware behaviors across diverse scenarios.

- We discuss key challenges and future directions, including physically grounded video generation, improved motion execution pipelines, integration with vision-based whole-body control, and extensions to manipulation-intensive tasks.

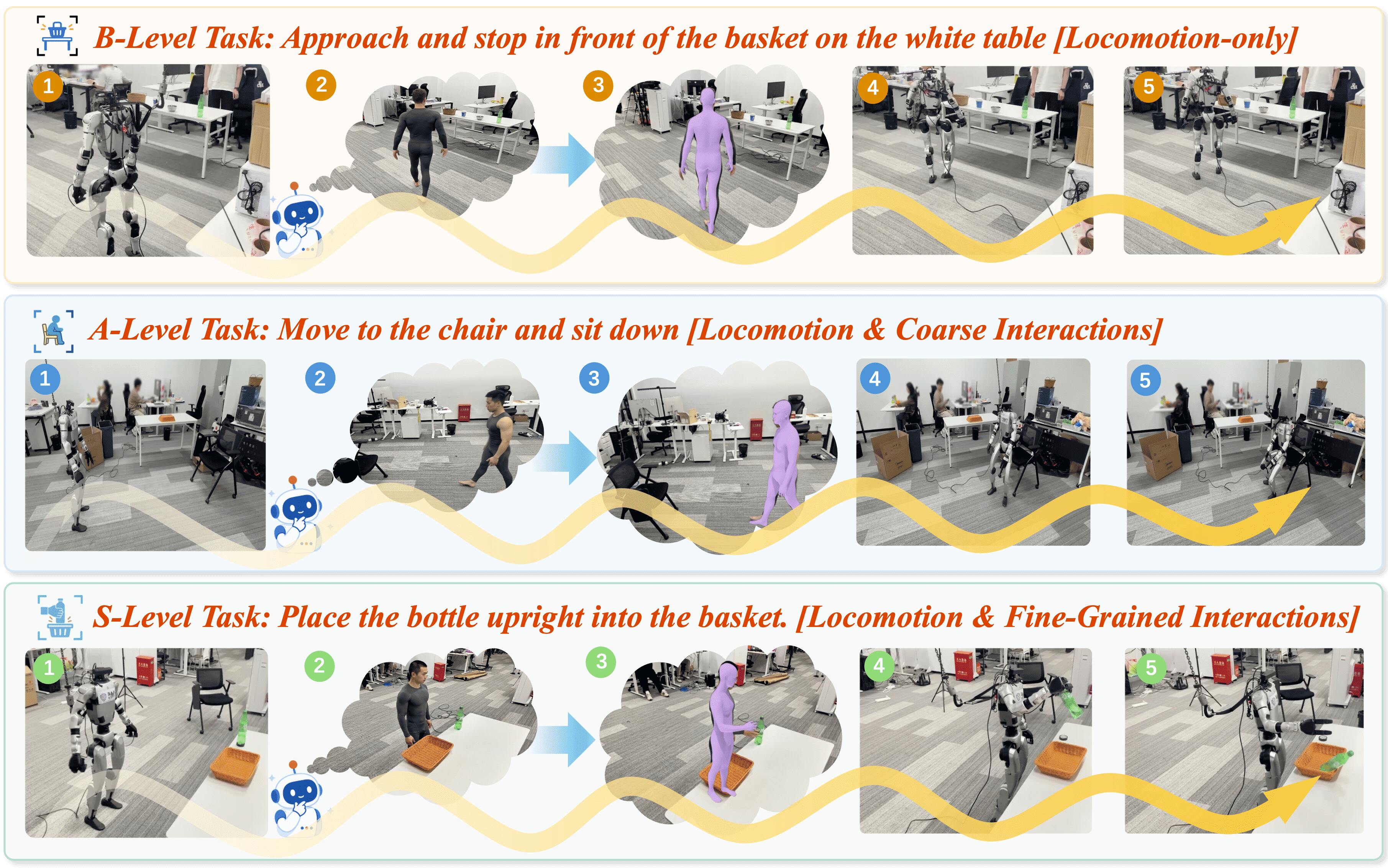

Image Overview

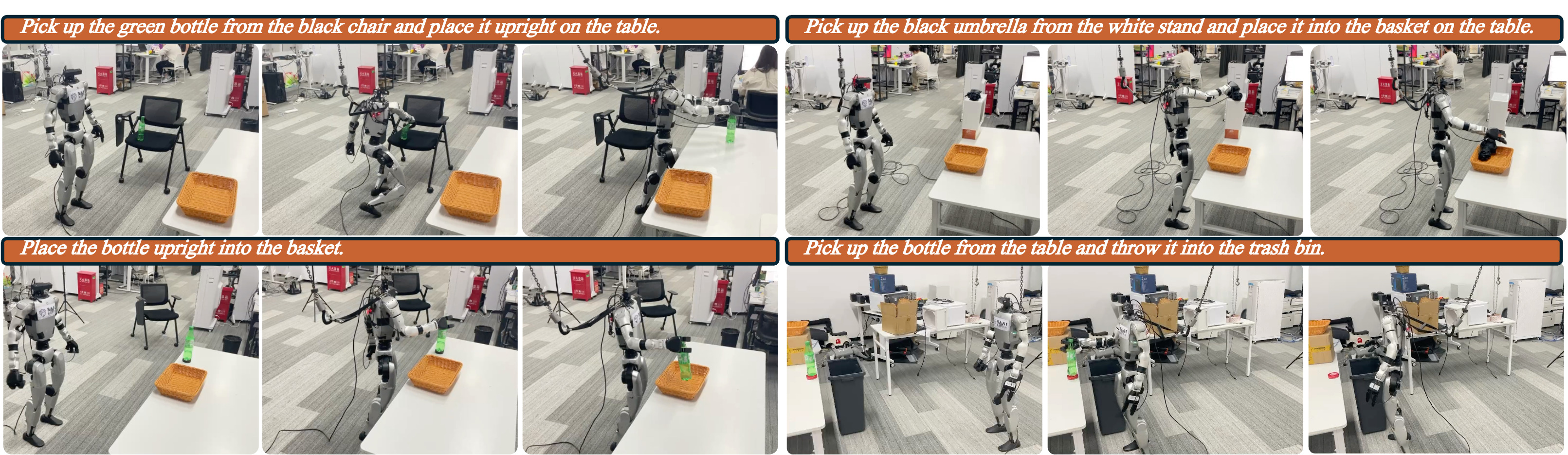

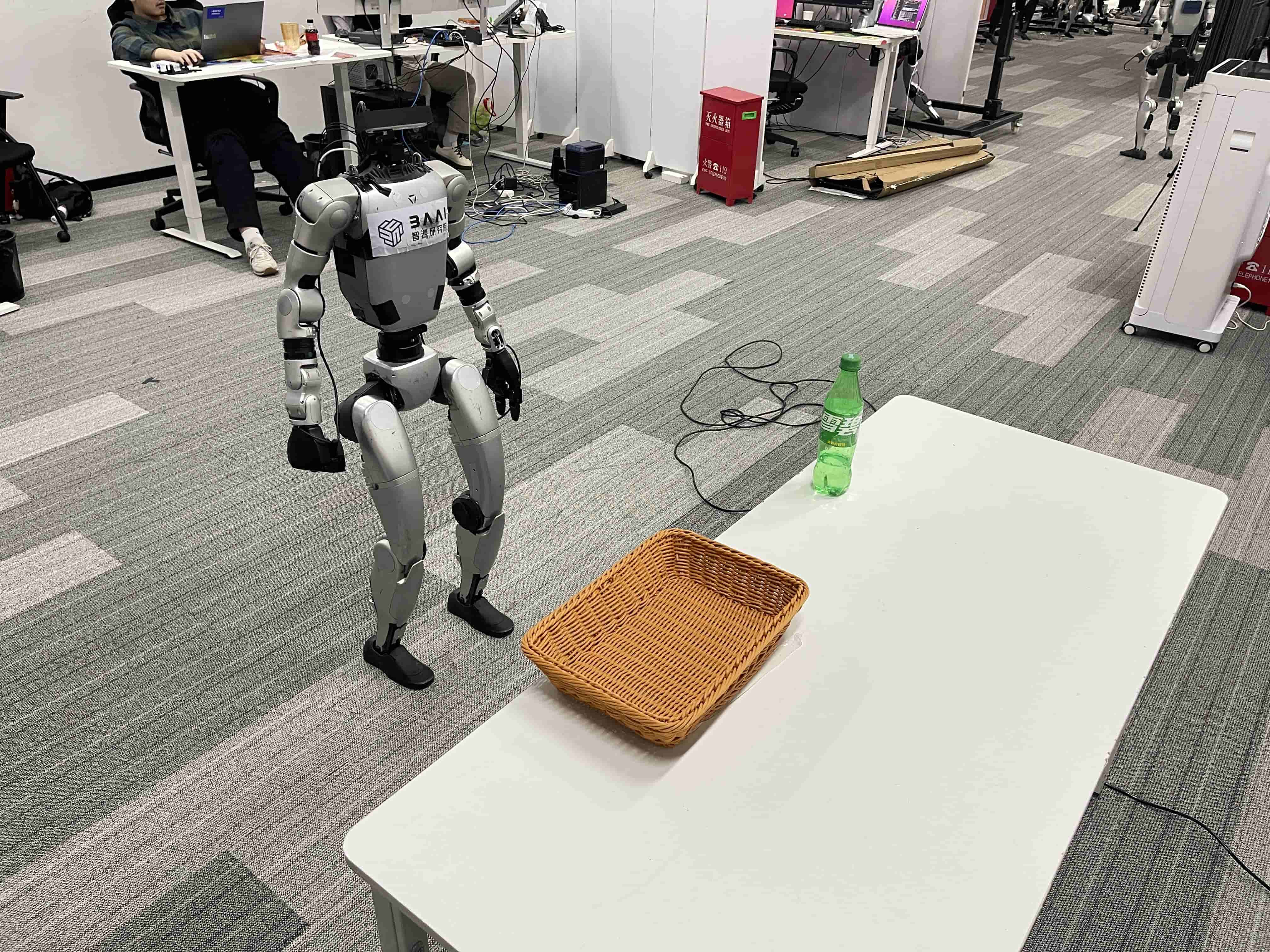

ExoActor capabilities across tasks of increasing difficulty, showing how exocentric video generation produces imagined demonstrations that can be converted into executable humanoid behaviors.

Overview of the ExoActor framework: task instruction and initial observation are transformed through embodiment transfer, action decomposition, video generation, motion estimation, and motion tracking.
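The five stages named in this overview can be read as a chain of functions, each consuming the previous stage's output. The following is a rough sketch of that data flow only: the stage bodies are stubs that write dummy values, and all identifiers (`make_stub`, `run_pipeline`, the context keys) are hypothetical and do not correspond to the released implementation.

```python
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Stage = Callable[[Context], Context]


def make_stub(name: str, output_key: str) -> Stage:
    """Return a placeholder stage that logs its name and writes a dummy output."""
    def stage(ctx: Context) -> Context:
        new_ctx = dict(ctx)  # copy so each stage leaves its input untouched
        new_ctx[output_key] = f"<{name} output>"
        new_ctx["trace"] = list(ctx.get("trace", [])) + [name]
        return new_ctx
    return stage


# Stage order follows the overview caption; the output keys are illustrative.
STAGES: List[Tuple[str, str]] = [
    ("embodiment_transfer", "human_like_frame"),
    ("action_decomposition", "step_prompts"),
    ("video_generation", "video"),
    ("motion_estimation", "motion"),
    ("motion_tracking", "robot_commands"),
]


def run_pipeline(instruction: str, observation: str) -> Context:
    """Pass a task instruction and an initial observation through every stage."""
    ctx: Context = {"instruction": instruction, "observation": observation}
    for name, key in STAGES:
        ctx = make_stub(name, key)(ctx)
    return ctx


if __name__ == "__main__":
    result = run_pipeline("sit on the chair", "observation.png")
    print(" -> ".join(result["trace"]))
```

Swapping a stub for a real component would only require a function with the same context-in, context-out shape, which is the main reason for structuring the sketch this way.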

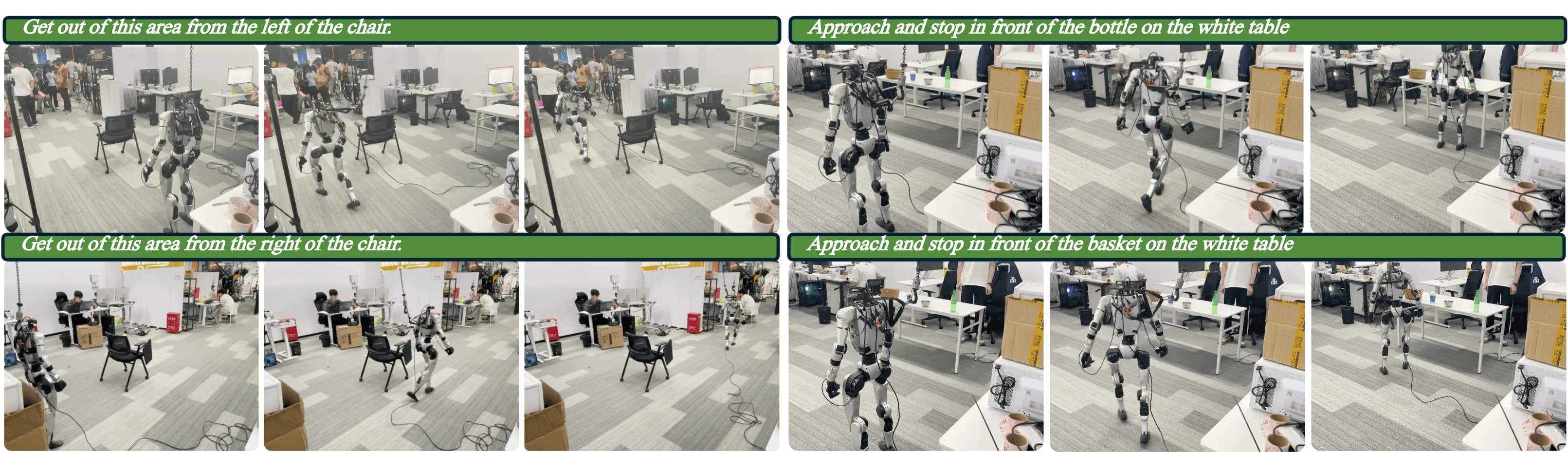

B-level (easy) cases focus on basic locomotion, goal-directed navigation, and simple obstacle avoidance with minimal interaction.

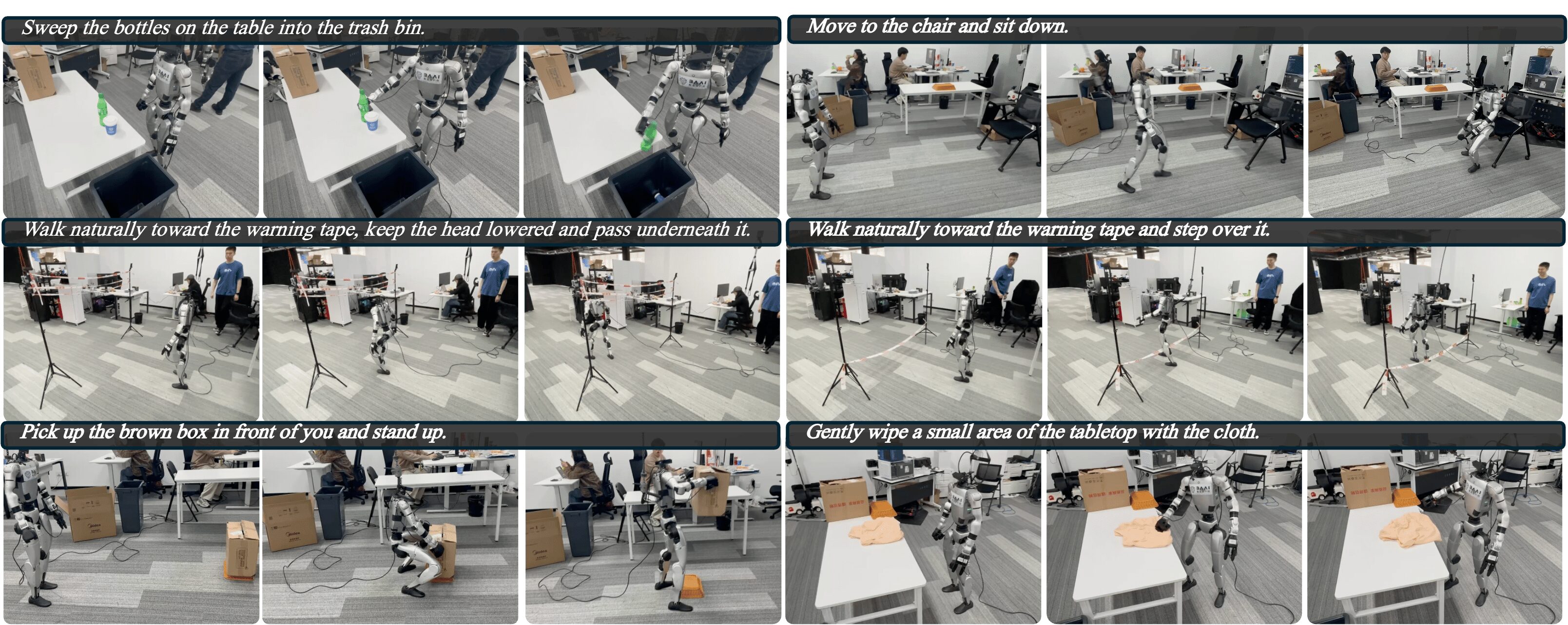

A-level (moderate) cases combine navigation with coarse whole-body interactions, such as sitting, wiping, lifting, or passing over and under obstacles.

S-level (challenging) cases require fine-grained, multi-step interactions and more precise coordination between locomotion and manipulation.

Pipeline Demonstration

Easy Tasks

Moderate Tasks

Challenging Tasks

BibTeX

@article{zhou2026exoactor,
  title={{E}xo{A}ctor: {E}xocentric Video Generation as Generalizable Interactive Humanoid Control},
  author={Yanghao Zhou and Jingyu Ma and Yibo Peng and Zhenguo Sun and Yu Bai and Börje F. Karlsson},
  journal={arxiv: 2604.27711},
  year={2026},
  url={https://arxiv.org/abs/2604.27711}
}